Five Worlds

Is there one "right" way to do software development, or does it depend on the context?

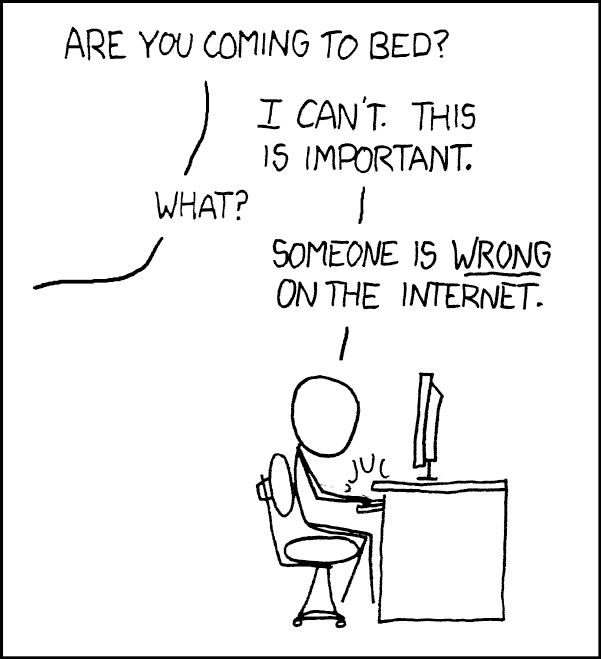

This is a continuation of my Sam on Joel series, but it is a little out of order. I last wrote about number 5 in the book, but this is article number 12. I was paging through the book, saw this article, and thought it related to my previous article about the LinkedIn Boy Scouts out there trying to tell the world they are doing everything wrong. Like the guy in the comic above, they just can't help themselves. It has never occurred to them that perhaps not all software projects are the same. Joel figured it out 25 years ago.

Unfortunately, despite Joel's discovery, that lesson is still being ignored.

Context Matters

As much as some developers may argue they have it all figured out, at best they have it figured out for their context. What they do works well for them. That doesn't mean it generalizes well. Sure, certain processes and ideas do, but not everything.

One of the first examples Joel points out is the agile idea that "Customers should be on the team." I admit I am a big fan of this idea and it works well for the types of projects I work on, but there are situations where it can be impractical. Joel mentions working at an e-mail provider that served millions of people. Or one could imagine Microsoft working on Windows, used by everyone from grandmothers to high school and college kids, nonprofits, tons of businesses of all sizes, big banks, tech giants, and the DOD. Who do you invite onto the team? Certainly not all of them. How do you make sure all their needs are represented? A simple idea gets complicated very quickly when moving from one context to another.

Types of contexts

Joel breaks down the various types of contexts and talks about how they differ from each other.

Shrinkwrap

The first category shows the age of this article. It was written when the majority of commercial software was still distributed on CDs or DVDs in shrink-wrapped boxes.

Distinguishing Features: This category pertains to software that has zillions of users who often have alternatives. The programmers have little control over what machines it runs on and all the various drivers and other software they have installed. In the case of web apps, the programmers have no control over the browser and OS used.

Main Concerns: Ease of use/Good UI. Broad portability. Ease of adding new features to stay competitive.

Joel also adds a few subcategories with their own caveats:

- open source - Joel puts this in its own sub-category because the dynamics are different: no one is getting paid, the teams are more dispersed, and work is done asynchronously.

- web-based - He lumps web apps in with shrinkwrap because developers have no control over what browser, OS, or even platform (mobile vs. desktop) the user is using.

- consultingware - These are ERP systems, CRMs such as Salesforce, etc. that require so much customization by consultants that they often end up falling in the next category.

Internal

These are your typical large internal IT projects built for internal corporate users.

Distinguishing Features: Because these projects are internal and run by the IT department, the developers have a lot more control over the end users' machines. This may not be as true with more remote work and the advent of "bring your own device" cultures. Also, the audience is captive. There is no competition or alternative. Usage is dictated from on high.

Main Concerns: UI/UX is not really a priority since users are captive. Mostly getting it done on time and within budget is the key priority (even if they do tend to run over). Continuity/Robustness can also be a concern depending on how critical the system is.

Embedded

This category is about projects that are resource-constrained, often running on microcontrollers. Think IoT and OT projects.

Distinguishing Features: Limited resources. Low amounts of storage and RAM, often slower CPUs.

Main Concerns: The software is often not updatable, which leads to a strong desire to get it right the first time. Heat and power. Battery life. Efficient and optimized computation and storage.

Games

This category is all about video games.

Distinguishing Features: Joel lists two. One is the hit-oriented nature of the games industry, which hasn't changed much over the years, although the economics have changed a little with add-ons and in-game purchases. The second is the idea that there is only one version. I don't think that is as true anymore. It may still hold for consoles, but PC games get regular updates. Personally, I would say the key distinguishing features today are the performance orientation and, related to the hit orientation, the pressure to hit key marketing deadlines.

Main Concerns: Probably a big one is hitting deadlines around console releases. You hear a lot about crunch culture. Performance is another, with lots of games going for photorealism these days.

Throwaway Stuff

This is fairly self-explanatory: it is mostly one-and-done things. A lot of scripting tasks fall here, for example converting a bunch of CSV data into JSON or something similar. I would also put a lot of simple lab experiments that are run once in this category. Most code produced by engineering students in college falls in this category too.

Distinguishing Features: Generally do just one simple task. Generally used once - at least that is the intent.

Main Concerns: Solve the problem in the quickest way possible. Doesn't need to be very robust or have a good UI/UX. Doesn't need to be as readable or maintainable.

How do Joel's thoughts hold up 25 years later?

His categories might have blurred a little bit and the concerns for some of them might have changed slightly, but I would say overall his observations are still pretty accurate.

My Thoughts

What category do most LabVIEW projects fit in?

I think you can find LabVIEW projects in all the categories. VIPM is a good example of shrinkwrap software because it has a lot of users, although it doesn't have a ton of competition. Most test systems are probably an example of internal software because we have a high level of control over the machines they run on. Any RT or LabVIEW FPGA programs or anything dealing with Arduino or Raspberry Pi would qualify as embedded. Not many people are creating video games with LabVIEW, but there are certainly LabVIEW projects where performance is very important, particularly end-of-line test systems, where the test system may be the bottleneck for the whole assembly line. A lot of code written by grad students in university labs would probably qualify as throwaway code. I'm also sure we've all written throwaway code at some point. Hopefully, we were actually able to throw it away when we were done with it.

What about TDD, CI/CD, etc.?

I often promote TDD, CI/CD, and various other "best" practices. I've found a lot of value in using them on the types of projects that I work on. With this article, I am not saying that things like TDD and CI/CD aren't helpful or lack benefits across all these scenarios. I am saying that the tradeoffs are different. We may have to adapt our processes to the context.

As an example, writing a few end-to-end tests for a piece of throwaway code may be useful. It could help catch some bugs and give the user/programmer the confidence that the code does what they intended. That would be reasonable. However, telling that person that they need a full suite of unit tests for regression testing and that they need to have CI/CD to run the tests for them automatically is probably going to be a hard sell.
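To make that concrete, here is a rough sketch of what "a few end-to-end tests" might look like for the CSV-to-JSON kind of throwaway script mentioned earlier. The function names (`csv_to_json`, `check_end_to_end`) and the sample data are made up for illustration. It uses only Python's standard library and is just one plausible shape for such a script, not a recommendation for how it must be done.

```python
import csv
import io
import json


def csv_to_json(csv_text: str) -> str:
    """Convert CSV text with a header row into a JSON array of objects."""
    # DictReader uses the first row as keys; every value stays a string.
    rows = list(csv.DictReader(io.StringIO(csv_text)))
    return json.dumps(rows, indent=2)


def check_end_to_end() -> None:
    """One end-to-end check: known input in, expected structure out."""
    # Hypothetical sample data, small enough to verify by eye.
    sample = "name,channel\nDAQ1,0\nDAQ2,1\n"
    result = json.loads(csv_to_json(sample))
    assert result == [
        {"name": "DAQ1", "channel": "0"},
        {"name": "DAQ2", "channel": "1"},
    ]


if __name__ == "__main__":
    check_end_to_end()
    print("end-to-end check passed")
```

A single check like this takes a couple of minutes to write and lives in the same file as the script. There is no test framework, no pipeline, and nothing to maintain once the script has done its job, which is about the right level of ceremony for this category.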

Also, I recently wrote about my non-negotiables for software development. I think these still hold for most of the categories above, throwaway code being the exception. Of course, the application is a little different in each of those categories, but I do believe they all still apply.

What do you think?

Do you agree with Joel's categorization? Do you agree with his assessment that each category has different needs and therefore requires different tools and processes? Are there any tools or processes that you think cut across all categories? What about my non-negotiables? Do you feel they hold across categories (minus throwaway code)?